Building an EU-Only AI Stack: Nextcloud MCP on Leaf.cloud

A journey through self-hosted LLMs, MCP integration challenges, and cost-effective observability

The promise is compelling: connect your personal knowledge base to AI assistants while keeping everything within EU borders. No data leaving the continent. Full control over your infrastructure. GDPR compliance by design.

We set out to build exactly this—a private AI stack running on EU-only infrastructure, integrating with Nextcloud for notes, files, and project management. Here's what we learned.

The Infrastructure

Leaf.cloud caught our attention as an EU-only cloud provider running managed Kubernetes via Gardener. They offer a two-week free trial, which gave us time to properly test GPU workloads without upfront commitment.

Our test cluster:

2 worker nodes running eg1.v100x1.2xlarge: 8 vCPU, 16GB RAM, 1x Nvidia V100 GPU (16GB VRAM) per node

Managed Kubernetes with automatic updates and built-in DNS/TLS via Gardener

The pricing is competitive for GPU instances:

| Instance | GPU | $/hr | $/month |
| --- | --- | --- | --- |
| eg1.v100x1.2xlarge | V100 16GB | $1.22 | ~$890 |
| eg1.a100x1.V12-84 | A100 80GB | $1.61 | ~$1,174 |
| eg1.h100x1.V24_96 | H100 | $4.12 | ~$3,006 |

For our 2-node V100 cluster: approximately $1,780/month at full utilization.
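The monthly figures follow from the hourly rates at roughly 730 hours per month. A minimal sketch of that arithmetic is below. The office-hours schedule (8.5 hours a day, 5 days a week) is an illustrative assumption, as is the premise that hibernated nodes accrue no GPU charges; check the provider's billing terms before relying on it.

```python
HOURS_PER_MONTH = 730  # average hours in a month (8,760 / 12)


def monthly_cost(hourly_rate: float, nodes: int = 1, hours: float = HOURS_PER_MONTH) -> float:
    """Cost in USD of running `nodes` instances for `hours` hours."""
    return round(hourly_rate * nodes * hours, 2)


# Always-on 2-node V100 cluster, using the hourly rate from the table above.
always_on = monthly_cost(1.22, nodes=2)

# Assumed office-hours schedule: 8.5 h/day, 5 days/week, 52 weeks spread over 12 months.
office_hours = monthly_cost(1.22, nodes=2, hours=8.5 * 5 * 52 / 12)

print(f"always on:    ${always_on:,.2f}")
print(f"office hours: ${office_hours:,.2f}")
```

Under these assumptions the always-on cluster costs about $1,781 per month, while an office-hours schedule comes to roughly a quarter of that.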

The Stack

Our architecture connects several components:

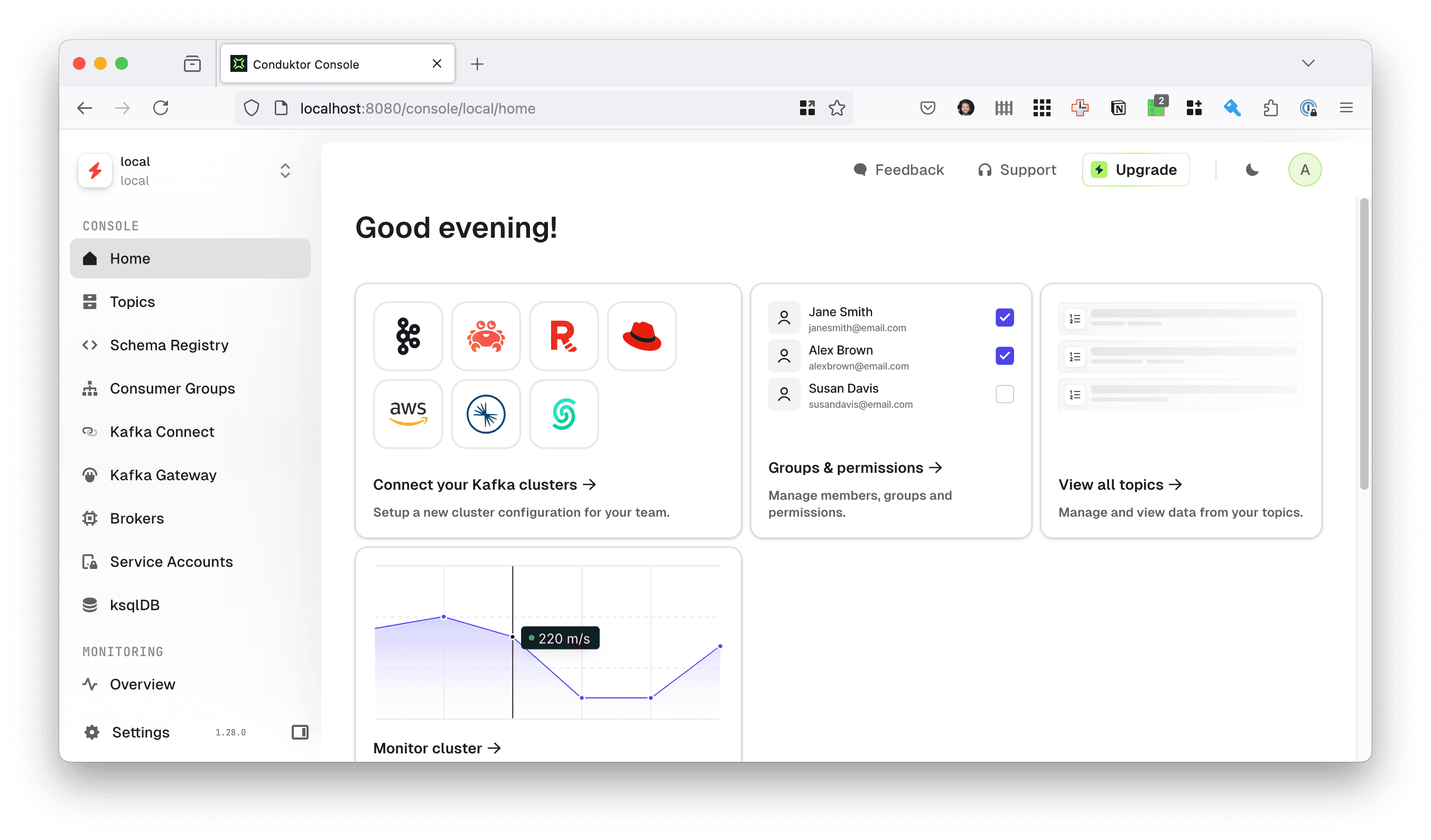

Open WebUI serves as our chat interface, chosen for its MCP client support and clean UI. It connects to Ollama running on the GPU nodes for local model inference.

The Nextcloud MCP Server bridges the gap between LLMs and Nextcloud APIs—exposing Deck boards, Notes, and WebDAV file operations as MCP tools that AI assistants can invoke.

The MCP Server

The Nextcloud MCP Server exposes several Nextcloud apps as MCP tools:

Deck - Kanban boards for project management

Notes - Markdown note-taking with categories

WebDAV - Full file system operations

Calendar - Event management (available but not enabled in our test)

Deployment is straightforward:

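    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: nextcloud-mcp
      namespace: ai
    spec:
      replicas: 1
      # apps/v1 requires a selector that matches the pod template labels;
      # the label used below is illustrative
      selector:
        matchLabels:
          app: nextcloud-mcp
      template:
        metadata:
          labels:
            app: nextcloud-mcp
        spec:
          containers:
            - name: mcp
              image: ghcr.io/cbcoutinho/nextcloud-mcp-server:latest
              command:
                - /app/.venv/bin/nextcloud-mcp-server
                - run
                - --host
                - 0.0.0.0
                - --enable-app
                - deck
                - --enable-app
                - webdav
                - --transport
                - streamable-http
              # Nextcloud credentials are injected from a Secret
              envFrom:
                - secretRef:
                    name: nextcloud-mcp-secret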

The server uses streamable HTTP transport, making it accessible to MCP clients over the network.
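Over streamable HTTP, an MCP client talks to the server by POSTing JSON-RPC 2.0 messages to a single endpoint. The sketch below only builds the first two requests (it does not send them), to show what goes over the wire. The URL, port, and `/mcp` path are placeholders for however the service is exposed in your cluster, and the client name is made up.

```python
import json
import urllib.request
from typing import Optional

# Assumed in-cluster URL; the service name, port, and path are placeholders.
MCP_URL = "http://nextcloud-mcp.ai.svc.cluster.local:8000/mcp"


def build_mcp_request(method: str, params: Optional[dict] = None,
                      request_id: int = 1) -> urllib.request.Request:
    """Build (but do not send) a JSON-RPC 2.0 POST for a streamable HTTP MCP endpoint."""
    payload = {"jsonrpc": "2.0", "id": request_id, "method": method}
    if params is not None:
        payload["params"] = params
    return urllib.request.Request(
        MCP_URL,
        data=json.dumps(payload).encode("utf-8"),
        method="POST",
        headers={
            "Content-Type": "application/json",
            # The server may answer with plain JSON or with an SSE stream.
            "Accept": "application/json, text/event-stream",
        },
    )


# Handshake first, then ask for the tool catalog.
initialize = build_mcp_request("initialize", {
    "protocolVersion": "2025-03-26",
    "capabilities": {},
    "clientInfo": {"name": "example-client", "version": "0.1.0"},
})
list_tools = build_mcp_request("tools/list", request_id=2)
```

A real client also has to send the `notifications/initialized` message after the handshake and echo back any session ID the server returns, so in practice an MCP SDK is the easier route. The sketch is only meant to make the transport less abstract.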

The Context Overhead Problem

Here's where reality diverges from the ideal. With all tools enabled, the MCP server presents approximately 20,000 tokens of tool definitions to the LLM. This includes detailed schemas for every Deck operation (create board, create card, assign labels, move cards between stacks), every WebDAV operation (list, read, write, copy, move, search), and all Notes functionality.

For cloud LLMs with 100k+ context windows, this overhead is negligible. For local models running on a V100 with 16GB VRAM, it's a significant constraint.
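To make the constraint concrete, here is a rough budget calculation. The 20,000-token figure is the one quoted above; the context window sizes, the reply reserve, and the four-characters-per-token heuristic are illustrative assumptions, and real tokenizers will give different counts.

```python
import json

CHARS_PER_TOKEN = 4  # rough heuristic for English/JSON text; real tokenizers differ


def estimate_tokens(tools: list) -> int:
    """Approximate token cost of a list of serialized tool definitions."""
    return len(json.dumps(tools)) // CHARS_PER_TOKEN


def remaining_context(context_window: int, tool_tokens: int, reply_reserve: int = 1024) -> int:
    """Tokens left for the conversation after tool definitions and a reply budget."""
    return context_window - tool_tokens - reply_reserve


for window in (8_192, 32_768, 131_072):
    left = remaining_context(window, tool_tokens=20_000)
    print(f"{window:>7} token window -> {left:>7} tokens left for the conversation")
```

A negative result means the tool definitions alone overflow the window, so the runtime has to truncate something before the model ever sees the user's message.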

Model Performance Reality

We tested a range of models through Ollama:

| Model | Size | Tool Use Reliability |
| --- | --- | --- |
| mistral:7b | 7B | Unreliable with 20k context overhead |
| deepseek-r1:8b | 8B | Inconsistent tool selection |
| qwen2.5:14b | 14B | Better but still misses tool calls |
| deepseek-r1:14b | 14B | Moderate success rate |
| ministral-3:14b | 14B | Similar to qwen2.5 |
| gpt-oss:20b | 20B | Improved but not reliable |
| deepseek-r1:32b | 32B | Best local option, still imperfect |

Key findings:

Small models (7B-14B) struggle with the cognitive load of 60+ tool definitions. They often hallucinate tool names, miss required parameters, or fail to recognize when a tool should be used at all.

Larger models (32B+) perform better but still show inconsistency. The V100's 16GB VRAM limits which models we can run effectively—an A100 80GB would significantly expand our options.

Cloud LLMs (Claude, Mistral AI) handle the tool definitions without issue. They correctly identify when to use tools, select the right ones, and structure arguments properly.

This isn't a criticism of local models—they're impressive for their size. But MCP's design assumes LLMs can handle large tool catalogs gracefully, which is currently only reliable with frontier models.

MCP Client Limitations

Open WebUI supports MCP connections, but with significant limitations:

No MCP Sampling Support - The MCP specification includes a "sampling" feature that lets servers request LLM completions for sub-tasks. Open WebUI doesn't implement this, nor do other MCP clients like claude-code and gemini-cli, meaning the MCP server can only provide tools, not leverage the LLM for intelligent operations.

Static Tool Listing - Tools are loaded once when the connection is established. There's no dynamic tool registration based on context or user needs.

No Tool Filtering - You can't selectively enable/disable tools per conversation or assistant.

The "App Expert" Workaround

To reduce context overhead and improve reliability, we found success with an App Expert pattern:

Instead of one assistant with all tools, create multiple specialized assistants:

Deck Expert - Only Deck tools enabled

Notes Expert - Only Notes tools enabled

Files Expert - Only WebDAV tools enabled

Each expert has a smaller tool set (~5-8k tokens instead of 20k), which smaller models handle more reliably. Users switch between experts based on their current task.

This works, but it's a workaround for what should be a protocol-level feature. The MCP specification supports dynamic tool sets (a server can send a notifications/tools/list_changed message when its tool list changes), but clients need to act on it.
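The App Expert split amounts to partitioning the tool catalog by app. A minimal sketch, assuming tool names carry a per-app prefix; the names below are hypothetical and the real server's names may differ:

```python
# Hypothetical tool names; the real server's names may differ.
ALL_TOOLS = [
    "deck_create_board", "deck_create_card", "deck_move_card",
    "notes_create", "notes_search",
    "webdav_list", "webdav_read", "webdav_write",
]

# Each expert is defined by the name prefix of the tools it is allowed to see.
EXPERTS = {
    "Deck Expert": "deck_",
    "Notes Expert": "notes_",
    "Files Expert": "webdav_",
}


def tools_for(expert: str, tools: list = ALL_TOOLS) -> list:
    """Return only the tools whose names start with the expert's prefix."""
    prefix = EXPERTS[expert]
    return [name for name in tools if name.startswith(prefix)]


for expert in EXPERTS:
    print(f"{expert}: {tools_for(expert)}")
```

In Open WebUI the equivalent step is done by hand when configuring each assistant. The code only illustrates the partitioning, not an Open WebUI API.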

Observability on a Budget

Grafana Cloud's free tier provides:

1,500 samples/second ingestion rate

15,000 sample burst limit

Prometheus metrics, Loki logs, and basic dashboards

The challenge: a Kubernetes cluster generates thousands of metrics per scrape. Without filtering, we'd exceed the free tier immediately.
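A back-of-the-envelope check shows how quickly the limit is reached. In steady state each active series produces one sample per scrape, so the ingestion rate is the series count divided by the scrape interval. The intervals below are illustrative assumptions, not settings from our cluster.

```python
FREE_TIER_SAMPLES_PER_SEC = 1_500  # ingestion limit quoted above


def samples_per_second(active_series: int, scrape_interval_s: float) -> float:
    """Steady-state ingestion rate: each series yields one sample per scrape."""
    return active_series / scrape_interval_s


def max_series(scrape_interval_s: float, limit: float = FREE_TIER_SAMPLES_PER_SEC) -> int:
    """Largest number of active series that stays within the rate limit."""
    return int(limit * scrape_interval_s)


for interval in (15, 30, 60):
    print(f"{interval:>2}s scrape interval -> at most {max_series(interval):,} active series")
```

At a 60-second interval the budget is 90,000 active series; at 15 seconds it shrinks to 22,500.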

Our solution uses Grafana Alloy with aggressive metric filtering:

    prometheus.relabel "cadvisor_filter" {
      // forward_to is required; the remote_write component name is a placeholder
      forward_to = [prometheus.remote_write.grafana_cloud.receiver]

      // Drop all histogram buckets (huge cardinality)
      rule {
        source_labels = ["__name__"]
        regex         = ".*_bucket"
        action        = "drop"
      }

      // Keep only essential container metrics
      rule {
        source_labels = ["__name__"]
        regex         = "container_(cpu_usage_seconds_total|memory_working_set_bytes|memory_usage_bytes|network_receive_bytes_total|network_transmit_bytes_total|fs_usage_bytes|fs_limit_bytes)|machine_(cpu_cores|memory_bytes)"
        action        = "keep"
      }

      // Drop kube-system containers to reduce noise
      rule {
        source_labels = ["namespace"]
        regex         = "kube-system"
        action        = "drop"
      }
    }

We apply similar filtering to node exporter, kubelet, and DCGM (GPU) metrics. The result: comprehensive visibility into what matters while staying within free tier limits.
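Before deploying a filter like this, it helps to check which metric names actually survive it. The sketch below emulates the three cAdvisor rules in Python. It relies on the fact that Prometheus relabel regexes are fully anchored, which `re.fullmatch` reproduces; the sample metric names are just examples.

```python
import re

DROP_BUCKETS = re.compile(r".*_bucket")
KEEP = re.compile(
    r"container_(cpu_usage_seconds_total|memory_working_set_bytes|memory_usage_bytes"
    r"|network_receive_bytes_total|network_transmit_bytes_total|fs_usage_bytes|fs_limit_bytes)"
    r"|machine_(cpu_cores|memory_bytes)"
)
DROP_NAMESPACE = re.compile(r"kube-system")


def survives(name: str, namespace: str = "") -> bool:
    """Apply the drop/keep/drop rules in order, as the relabel pipeline would."""
    if DROP_BUCKETS.fullmatch(name):
        return False  # rule 1: histogram buckets are dropped
    if not KEEP.fullmatch(name):
        return False  # rule 2: anything not on the keep list is dropped
    return not DROP_NAMESPACE.fullmatch(namespace)  # rule 3: kube-system is dropped


for metric, ns in [
    ("container_cpu_usage_seconds_total", "ai"),
    ("container_memory_rss", "ai"),
    ("container_cpu_usage_seconds_total", "kube-system"),
    ("machine_cpu_cores", ""),
]:
    print(f"{metric} (namespace={ns or '-'}): {'kept' if survives(metric, ns) else 'dropped'}")
```

One thing the emulation makes visible: because the keep rule already discards everything outside its list, the bucket drop rule is redundant for this particular filter, though it does no harm to leave it in.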

Key metrics we kept:

GPU: utilization, memory usage, temperature, power consumption

Containers: CPU, memory, network I/O for our workloads

Nodes: CPU, memory, disk, network at the host level

MCP Server: Request rates and latencies

What the Metrics Revealed

We ran the POC over five working days, with the cluster auto-hibernating overnight and over weekends. This gave us clean data on actual usage patterns versus idle overhead.

Cluster Activity Windows:

| Day | Active Hours (CET) | Duration |
| --- | --- | --- |
| Jan 26-30 | 08:25 - 16:55 | ~8.5 hrs/day |

Resource Utilization Summary:

| Metric | Idle | Peak (during inference) |
| --- | --- | --- |
| GPU Utilization | 0% | 85% |
| GPU Power | 26-27W | 145.5W |
| GPU Temperature | 35°C | 69°C |
| Total AI Namespace Memory | ~1 GB | 7.1 GB |
| Ollama Memory (model loaded) | 15 MB | 5.3 GB |

MCP Server Performance:

| Metric | Value |
| --- | --- |
| Median latency (GET) | 175-212 ms |
| Median latency (POST/PUT) | ~175 ms |
| P95 latency | 244-470 ms |
| Error rate | 0% |

The V100 GPU was genuinely utilized—85% utilization during inference with power draw jumping from 27W idle to 145W. This confirms we weren't just burning GPU hours on CPU-bound work.

The Honest Assessment:

The infrastructure performed well. Zero errors across the five-day POC, sub-500ms API latencies, and efficient auto-hibernation. However, the observability data confirmed what we suspected from qualitative testing: smaller models struggled with MCP tool interactions due to context constraints.

With 20,000+ tokens of tool definitions competing for context space, models in the 7B-14B range frequently:

Failed to recognize when tools should be invoked

Hallucinated tool names or parameters

Lost track of multi-step operations

The 32B models showed improvement but still exhibited inconsistency. The V100's 16GB VRAM ceiling limits us to these smaller models—running a 70B parameter model that might handle the full tool catalog reliably would require an A100 80GB or H100.

Future Investigation:

A follow-up evaluation with an A100 instance ($1.61/hr vs $1.22/hr for the V100) would let us test whether larger models like deepseek-r1:70b or qwen2.5:72b can reliably handle the full MCP tool catalog. The 5x VRAM increase (80GB vs 16GB) opens up model sizes that may cross the threshold from "sometimes works" to "reliably works."
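A common rule of thumb estimates weight memory as parameter count times bits per weight, divided by eight. The sketch below applies it to a few model sizes. The 4-bit quantization and the fixed 2 GB headroom for KV cache and runtime are assumptions for illustration; actual usage depends on the quantization format and on context length, and 20,000 tokens of tool definitions will need more cache than a short chat.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits(params_billion: float, bits_per_weight: float, vram_gb: float,
         headroom_gb: float = 2.0) -> bool:
    """True if the weights plus a fixed headroom for KV cache and runtime fit in VRAM."""
    return weight_memory_gb(params_billion, bits_per_weight) + headroom_gb <= vram_gb


for size in (7, 14, 32, 70):
    need = weight_memory_gb(size, bits_per_weight=4)
    print(f"{size:>3}B @ 4-bit: ~{need:.1f} GB weights | "
          f"V100 16GB: {fits(size, 4, 16)} | A100 80GB: {fits(size, 4, 80)}")
```

A model that fails this check may still run with some layers offloaded to system RAM, but at a noticeable speed cost.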

For now, the App Expert pattern (specialized assistants with reduced tool sets) remains the practical path for self-hosted deployments on V100-class hardware.

Lessons Learned

1. MCP Specification vs. Reality

The MCP specification is thoughtful and comprehensive. Client implementations are still catching up. Features like sampling, dynamic tools, and resource subscriptions exist in the spec but are rare in practice.

Recommendation for MCP server developers: Design for the lowest common denominator. Provide fewer, more focused tools rather than comprehensive coverage. Consider offering multiple tool "profiles" that clients can select.

2. Context Reduction Strategies

If you're building MCP servers:

Minimize tool descriptions - Every token counts for small models

Consolidate related operations - One manage_card tool with an action parameter beats five separate tools

Make parameters optional with sensible defaults

Consider tool "tiers" - Basic tools always available, advanced tools on request
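The consolidation idea from the list above can be sketched as one tool definition plus a dispatcher. The schema fields follow the shape MCP uses for tool definitions (name, description, inputSchema), but the actions, parameters, and handlers are hypothetical, and the handlers are stubs that return strings instead of calling Nextcloud.

```python
from typing import Any, Callable, Dict

# Hypothetical schema for a consolidated tool; actions and parameters are illustrative.
MANAGE_CARD_TOOL = {
    "name": "manage_card",
    "description": "Create, move, or archive a Deck card.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "action": {"type": "string", "enum": ["create", "move", "archive"]},
            "card_id": {"type": "integer"},
            "title": {"type": "string"},
            "stack_id": {"type": "integer"},
        },
        "required": ["action"],  # everything else is optional with defaults
    },
}


def _create(title: str = "Untitled", stack_id: int = 1, **_: Any) -> str:
    return f"created '{title}' in stack {stack_id}"


def _move(card_id: int, stack_id: int = 1, **_: Any) -> str:
    return f"moved card {card_id} to stack {stack_id}"


def _archive(card_id: int, **_: Any) -> str:
    return f"archived card {card_id}"


HANDLERS: Dict[str, Callable[..., str]] = {
    "create": _create, "move": _move, "archive": _archive,
}


def manage_card(action: str, **kwargs: Any) -> str:
    """Dispatch on the action parameter instead of exposing one tool per operation."""
    if action not in HANDLERS:
        raise ValueError(f"unknown action: {action}")
    return HANDLERS[action](**kwargs)
```

The model then sees one schema instead of three, at the cost of a slightly more complex parameter set. Whether that trade helps a given small model is worth testing rather than assuming.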

3. GPU Memory is the Constraint

For local LLM deployments, GPU VRAM determines what's possible more than compute. The V100's 16GB limits us to models that fit with room for context. The A100 80GB, at about $0.39/hr more ($1.61 vs $1.22), would dramatically expand model options.

4. EU Infrastructure is Viable

Leaf.cloud proved capable for this workload. Gardener-based Kubernetes "just works"—automated TLS via cert-manager, DNS management, and straightforward GPU scheduling. The two-week free trial is genuinely useful for evaluation.

Where This Goes Next

The pieces are almost there. We need:

Better MCP client implementations - Sampling support, dynamic tools, tool filtering

Smarter tool presentation - Lazy-load tool definitions based on conversation context

Smaller, more capable models - The gap between 14B and 70B models is closing

Quantization improvements - Running larger models in less VRAM
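As an illustration of what lazy tool loading could look like on the client side, the sketch below picks tool groups from keywords in the user's message. The keyword map is hypothetical and deliberately naive; a real client might use embeddings or a small classifier instead.

```python
import re

# Hypothetical keyword map from tool group to trigger words.
TOOL_GROUPS = {
    "deck": {"board", "card", "kanban", "stack"},
    "notes": {"note", "notes", "markdown"},
    "webdav": {"file", "files", "folder", "upload"},
}


def select_groups(message: str) -> list:
    """Return the tool groups whose keywords appear in the user's message."""
    words = set(re.findall(r"[a-z]+", message.lower()))
    return sorted(group for group, keywords in TOOL_GROUPS.items() if words & keywords)


print(select_groups("Move the card to the Done stack"))
print(select_groups("Save this note as a file."))
```

Only the selected groups' definitions would then be sent to the model, so a message about cards never pays the token cost of the WebDAV schemas.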

The dream of a private AI assistant that knows your notes, manages your projects, and respects your data sovereignty is achievable today, with the right model and some workarounds. We expect it to become much smoother within a year or two as clients and models improve.

Try It Yourself

The stack we tested:

Leaf.cloud - EU Kubernetes with GPU instances

Open WebUI - Chat interface with MCP support

Ollama - Local model serving

Nextcloud MCP Server - MCP bridge to Nextcloud

Grafana Alloy - Observability pipeline

Start with cloud LLMs (Claude, Mistral) for reliable tool use, then experiment with local models once your MCP server is working. And if you're building MCP clients or servers—please prioritize the sampling specification. The ecosystem needs it.

Questions or experiences to share? The Nextcloud MCP server is open source and welcomes contributions.